Lecture 4 - Tokenization: From Text to Tokens

Welcome back!

Last time: Neural networks and deep learning - how models learn from data

Today: Tokenization, how text becomes numbers

Why it matters: How we split text affects everything: model behavior, cost, fairness across languages.

Ice breaker

Actually this time:

What can you do better than an LLM?

Agenda for today

- Bridging from last time: why tokenization matters

- Historical approaches: stemming and lemmatization

- Modern subword tokenization: BPE and WordPiece

- Hands-on: How ChatGPT sees text

- Tokenization and fairness

- Preview: Word embeddings (next week)

Part 1: Why Tokenization Matters

Remember the NLP pipeline

From Lecture 2:

1. Tokenization - Split text into pieces

2. Representation - Convert to numbers

3. Learning - Train a model

Today: Deep dive into step 1, because it affects everything else!

Why tokenization is foundational

Your tokenization choice determines:

What the model can "see"

Your vocabulary size (memory and speed)

How you handle new/rare words

Whether your model works across languages

The vocabulary explosion problem

English has:

- ~170,000 words in current use

- Countless proper nouns (names, places, brands)

- New words constantly ("COVID-19", "ChatGPT", "6-7")

- Typos and variations ("looooove", "alot", "independant")

If every unique word gets its own token:

- Massive vocabulary

- Rare words poorly represented

- Can't handle new words

- ~100,000+ possible output "labels"

Think-pair-share: Related words

Turn to your neighbor:

These words are clearly related, but to a computer they're completely different:

run, runs, running, ran, runner

happy, happier, happiest, happily, happiness

go, going, went, gone

How might we help a computer see the connection?

Part 2: Historical Approaches

Stemming: The crude solution

Idea: Chop off word endings to find the "stem"

Examples:

running -> run

runs -> run

runner -> run

easily -> easili

happiness -> happi

studies -> studi

Problem 1: Creates nonsense stems ("easili" and "happi" aren't words)

Problem 2: Different words collide to the same stem:

- "universal", "university", "universe" -> all become "univers"

- "policy", "police" -> both become "polic"

- "arm", "army" -> both become "arm"

Lemmatization: The smarter solution

Idea: Use linguistic knowledge to find the dictionary form (lemma)

Examples:

running -> run

ran -> run

better -> good

is -> be

mice -> mouse

Better! Uses dictionaries and morphological rules to find true word forms.

But: Slow, language-specific, still treats lemmas as atomic.

Why stemming and lemmatization aren't enough

Still one token per word (vocabulary explosion continues)

Language-specific (need new rules/dictionaries for each language)

Can't handle new words (not in the dictionary)

Loses information ("running" vs "ran" have different tenses!)

Part 3: Modern Subword Tokenization

Let's guess and check

Quick pair-share:

How would you split this sentence into pieces for a computer to process?

How many "words"/tokens do you think ChatGPT sees?

"I can't believe ChatGPT doesn't understand state-of-the-art LLM-training techniques like gobbledigook! 🤯"

The trick - Don't tokenize at word boundaries

Instead: Learn a vocabulary of subword units that can be combined

"unhappiness" -> ["un", "happiness"]

"ChatGPT" -> ["Chat", "GPT"]

"supercal..." -> ["super", "cal", "if", "rag", "il", "ist", "ic"]

Benefits:

- Fixed vocabulary size (50k subwords vs 170k+ words)

- New words break into known pieces

- Shared meaning ("un" = negation across many words)

Byte-Pair Encoding (BPE)

The dominant approach for modern LLMs

High-level idea:

- Start with character-level vocabulary

- Find the most frequent pair of adjacent tokens

- Merge them into a new token

- Repeat until vocabulary reaches target size

Result: Common words become single tokens, rare words split into pieces

BPE example (board work)

Let's build a toy BPE vocabulary together on the board

Training text: "I like to run in my running shoes when I'm running late"

We'll merge the most frequent pairs step by step and watch how "run" emerges as a token!

BPE: Training vs. Encoding

Training (learning the vocabulary):

- Scan corpus, count all adjacent token pairs

- Greedily merge the most frequent pair to get a new token

- Repeat until vocabulary reaches target size (e.g., 50k tokens)

- Save the ordered list of merge rules

Encoding (tokenizing new text):

- Apply the learned merge rules in priority order (order they were learned)

- Don't re-count frequencies, just apply the rules deterministically

- Same text always produces same tokens

Training: greedy, data-driven. Encoding: deterministic, fast.

BPE: Preventing cross-word merges

Problem: Without boundaries, BPE might merge characters across word boundaries.

"faster lower" split naively: f a s t e r l o w e r

The pair r + could merge across the two words!

Solution 1: End-of-word marker (original BPE, Sennrich et al. 2016)

Each word gets a </w> suffix before merging:

"faster" -> f a s t e r </w>

"lower" -> l o w e r </w>

Merges like er</w> stay within each word. The boundary is never crossed.

Solution 2: Space prefix (GPT-2 and all GPT descendants)

Mark word starts with the preceding space:

"faster lower" -> ["faster", "Ġlower"] (Ġ = space)

This is why "hello" and " hello" tokenize differently in the demo - the space is part of the next token, not the previous one.

BPE in practice

For real LLMs:

- Train on billions of words

- Create vocabulary of ~30k-50k subword tokens

- Common words: one token ("the", "and", "ChatGPT")

- Rare words: multiple tokens ("supercalifragilisticexpialidocious")

Tokenizer Variants (just FYI!)

| Algorithm | Used By | Key Idea |

|---|---|---|

| BPE | GPT-2/3/4/5, LLaMA, Claude | Greedy: merge most frequent pairs |

| WordPiece | BERT, DistilBERT | Merge pairs that maximize likelihood ratio |

| Unigram | T5, ALBERT, XLNet | Start big, prune tokens that hurt least |

WordPiece: Like BPE, but instead of raw frequency, scores merges by: Prefers merges where the combined token is more likely than you'd expect from the parts.

Unigram: Opposite direction from BPE:

- Start with a large vocabulary (all common substrings)

- Compute how much each token contributes to likelihood

- Remove the least useful tokens until target vocabulary size

Why subword tokenization works

- Balances vocab size and granularity

- Shares info across related words

- Handles new/rare words gracefully

- Data-driven - no linguistic rules needed

- Works across languages

This is why all modern LLMs use subword tokenization!

Special Tokens

Beyond regular text, LLMs use special tokens for control and structure:

End of text: <|endoftext|>- tells the model a document is complete

- Important to think about when using structured output (eg generating JSON / other formats)

Beginning of text: <|startoftext|> - marks the start

Padding: <pad> - fills in when batching sequences of different lengths

Unknown: <unk> - rare fallback for truly unknown input (less common with BPE)

Chat-specific: <|user|>, <|assistant|>, <|system|> - structure conversations

Example chat template (simplified):

<|system|>You are a helpful assistant.<|endoftext|>

<|user|>What's the capital of France?<|endoftext|>

<|assistant|>Paris is the capital of France.<|endoftext|>

This is why "system prompts" work. They go in a special place the model treats as instructions.

Understanding special tokens helps you understand prompt injection - malicious input can insert fake tokens like <|system|> to override instructions. More on this in later.

Part 4: Tokenization in Practice

Live demo: OpenAI tokenizer

Let's see how GPT actually tokenizes text

Go to: platform.openai.com/tokenizer

Try these examples and discuss:

- "running" vs "run"

- "ChatGPT"

- "supercalifragilisticexpialidocious"

- " hello" vs "hello"

- Code: "def main():"

- Math: "2+2=4"

- "🙂😀"

- "strawberry"

Why LLMs struggle with certain tasks

Question: Why do LLMs struggle to count letters in words or reverse words?

Turn to your neighbor and discuss

Why LLMs struggle with certain tasks

Answer: They don't see individual letters - common words are single tokens!

Example: "strawberry" = ["str", "awberry"]

The model can't count the "r"s - it doesn't see individual letters!

This is why prompting tricks sometimes work:

- "Spell it out letter by letter first"

- "Break the word into characters"

These force the model to generate character-level tokens

Fun fact: OpenAI's o1 was code-named "Strawberry"

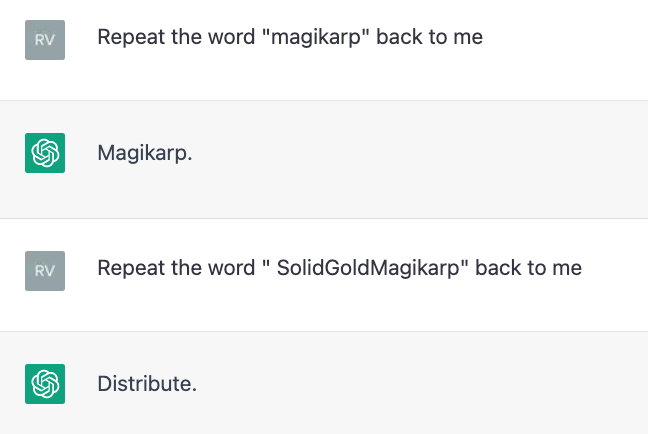

Tokenization archaeology: "SolidGoldMagikarp"

Story: In 2023, researchers discovered "glitch tokens" - tokens that made ChatGPT behave bizarrely.

One example: the token "SolidGoldMagikarp" (a Reddit username). When asked to repeat it, ChatGPT would:

- Claim it couldn't see the word

- Refuse to say it

- Output completely unrelated text

- Behave erratically

What happened? The tokenizer saw this Reddit username enough to make it a token. But the model rarely saw it during training - mismatch between tokenizer and model.

Quick skim now, but great reading for later!

Tokenizers are frozen

Once a model is trained, its tokenizer is fixed. You can't easily change it.

- In 2020, models tokenized COVID-19 as ~["CO", "VID", "-", "19"].

- Newer models trained after 2020 may have "COVID" as a single token.

Why newer models handle recent terms better: not just more data, updated tokenizers too.

Other tokenization effects

Arithmetic: Numbers tokenize inconsistently - sometimes digit-by-digit, sometimes as chunks

Code: Variable names split unpredictably

Rhymes: "cat" and "bat" might not share an "at" token

Tokenization shapes what LLMs find easy vs hard

Tokenizing Code vs Natural Language

Code and prose tokenize very differently:

Natural language: Words mostly stay intact

- "The quick brown fox" = 5 tokens

Code: Variable names split unpredictably

print= 1 token (very common)getUserDataFromDB= 6 tokens ["get", "User", "Data", "From", "DB"]mySpecialFunction= 4 tokens ["my", "Special", "Function"]

Why this matters:

- Longer sequences are harder for the model to understand

- Uses up context faster

Rule of thumb: Assume ~ 10 tokens per line of code when you're asking AI to parse code files

Token Vocabularies Across Models

Different models make different tokenization choices:

| Model | Vocab Size | Notes |

|---|---|---|

| GPT-2 | ~50k | Older, smaller vocabulary |

| GPT-4 | ~100k | Larger, better multilingual |

| Claude | ~100k | Similar to GPT-4 |

| LLaMA | ~32k | Smaller but efficient |

| BERT | ~30k | WordPiece, not BPE |

A prompt optimized for one model may be inefficient for another.

Why this matters for prompt engineering:

- Context window limits (e.g., 128k tokens) are in TOKENS, not words

- Few-shot examples eat into your token budget

- Verbose prompts = fewer tokens for the actual task

- Non-English prompts use more of your context window

Mental model: How big is a token?

Rules of thumb for English:

- ~4 characters per token (on average)

- ~0.75 words per token (or ~1.3 tokens per word)

- A typical page of text ≈ 500-700 tokens

- A typical email ≈ 200-400 tokens

- 128K token context ≈ a 250-page book

The cost of tokens

Typical API pricing (as of early 2026):

| Model | Input | Output |

|---|---|---|

| GPT-4 | ~$2.50 / 1M tokens | ~$10 / 1M tokens |

| Claude Sonnet | ~$3 / 1M tokens | ~$15 / 1M tokens |

| GPT-4o-mini | ~$0.15 / 1M tokens | ~$0.60 / 1M tokens |

Quick cost estimates (Claude Sonnet at ~$15/1M input):

- 1 email (~300 tokens): ~$0.005

- A novel (~100K tokens): ~$1.50

Tokens are cheap individually. Volume is where costs add up.

How it adds up

- Every time you send a message the LLM REREADS YOUR WHOLE CONVERSATION HISTORY as context

- If you're doing development work with lots of code, each message could easily be 10k+ tokens (~$0.20)

- If you set up a chatbot for many users / use LLMs to send spam emails...

Minification: Squeezing more into your context

You can strip characters to reduce token count before sending to an LLM.

Strategies:

| Content Type | Technique |

|---|---|

| Code | Remove comments, collapse whitespace |

| JSON | Strip whitespace, shorten keys |

| Markdown | Remove extra newlines, simplify formatting |

| Logs | Deduplicate, truncate timestamps |

Pros:

- Fit more in context window

- Reduce API costs

Cons:

- Harder for the model to "read" - formatting aids comprehension

- Harder for humans to read without whitespace?

- Diminishing returns (saving 10% rarely matters)

- Risk of removing important context

Rule of thumb: Minify data/logs aggressively. Keep code and instructions readable.

Activity: Tokenization Scavenger Hunt

Select a tokenizer (or compare them):

Find examples of each:

- A real English word that splits into 4+ tokens

- What's the longest English word you can find that is just one token?

- Find a 4-digit number that's ONE token, and another 4-digit number that's TWO tokens. What's the pattern?

- Find a word where changing the capitalization changes the number of tokens

- Find a string where GPT's and Claude's tokenizers produce different numbers of tokens.

COVID-19was 4 tokens in GPT-3 but is now 3 tokens. Can you find other examples of token count changing over time?- Translate "Hello, how are you today?" into at least 3 languages. Which language uses the MOST tokens?

- Find a non-English word that's a single token.

- If your name isn't common in English, how many tokens is it? Compare to a common English name.

Part 5: Tokenization and Fairness

Not all languages are created equal

BPE vocabularies are learned from training data.

If training data is mostly English:

- English words - efficient (one token per word)

- Other languages - split aggressively

This has real consequences

Token efficiency across languages

Same meaning, different token counts:

"Hello, how are you?" (English): 6 tokens

"你好,你好吗?" (Chinese): 11 tokens

Nǐ hǎo ma (Chinese, pinyin): 7 tokens

"مرحبا، كيف حالك؟" (Arabic): 14 tokens

Same semantic content, different token counts!

Why this matters

Cost: Many APIs charge per token

Context limits: Same token limit = fewer words in Chinese than English

Performance: More tokens = longer sequences = harder to learn

Fairness: English speakers get a better deal

Discussion: Is this a problem?

Turn to your neighbor:

- Is token inefficiency for non-English languages a fairness issue?

- Whose responsibility is it to address this?

- What could be done about it?

Train on more balanced multilingual data

Language-specific tokenizers (but lose cross-lingual transfer)

Character-level models (no bias, but less efficient)

Larger vocabularies - more slots for non-Latin characters (GPT-4o went from 100k to 200k vocabulary, improving Chinese efficiency ~3x)

Adjust pricing by language (some APIs do this)

Part 6: Looking Ahead

What we've learned today

Tokenization is foundational - it determines what models can "see"

Historical approaches: stemming and lemmatization (word-level, limited)

Modern approach: subword tokenization (BPE, WordPiece)

Tokenization affects LLM behavior (letter counting, arithmetic, etc.)

Tokenization has fairness implications (language efficiency, cost)

Connecting the dots

Lecture 2: AI development + Classical NLP

Lecture 3 (Monday): Deep learning foundations

Lecture 4 (today): Tokenization

Lab/Reflection Due Friday (Feb 6)

- Explore tokenization and/or neural network basics.

Monday: Sequence-to-sequence models and word embeddings

Monday: Attention!